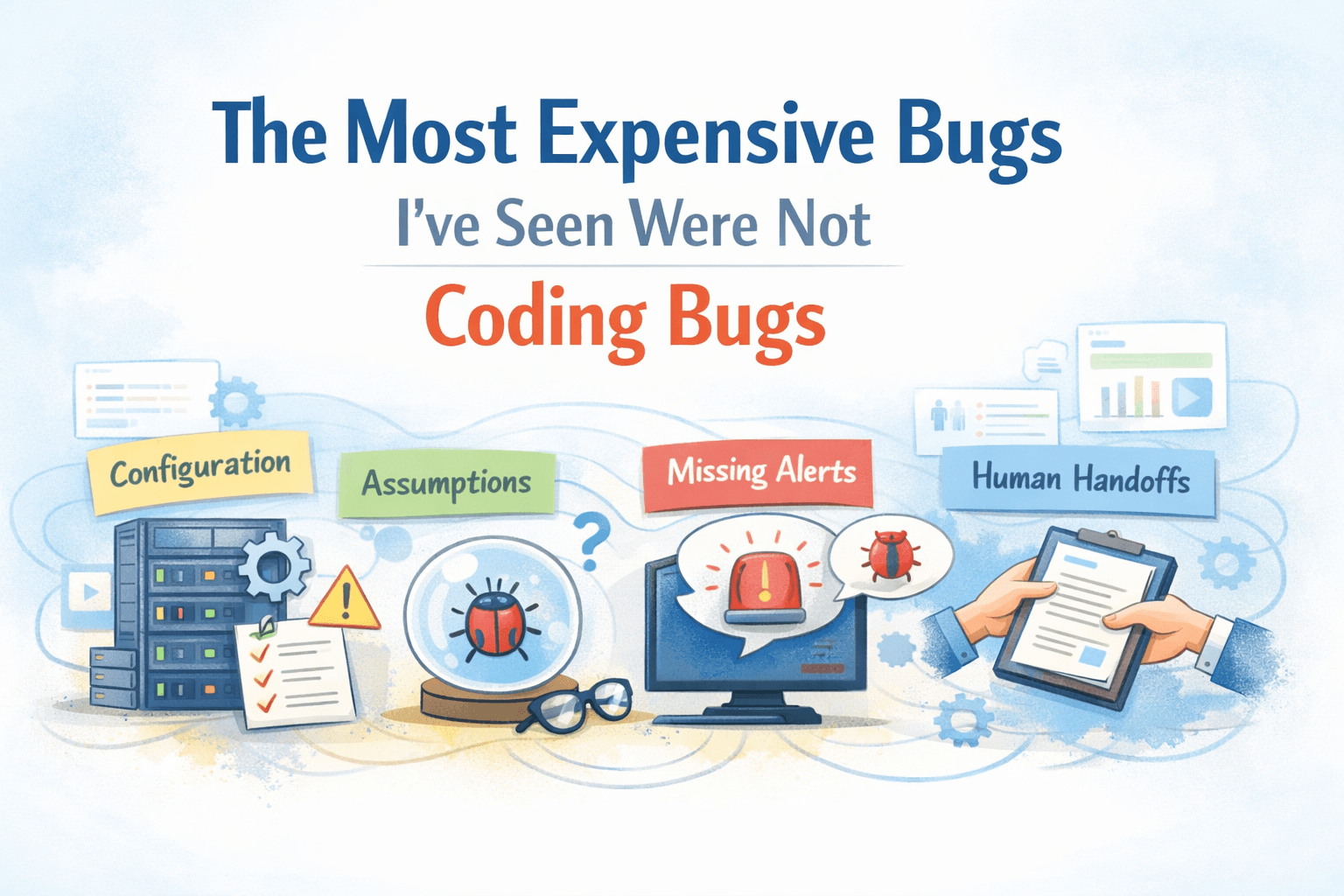

The Most Expensive Bugs I’ve Seen Were Not Coding Bugs

The author is a Staff Engineer and technology enthusiast with over a decade of experience building large-scale distributed systems using Java, Go, and cloud-native technologies. He writes about software engineering, system design, and developer productivity, simplifying complex tech concepts into practical insights for engineers and creators.

After more than a decade in software engineering, I’ve learned something uncomfortable:

The bugs that caused the most damage in production were rarely caused by bad code.

They didn’t come from syntax errors, wrong loops, or missed null checks.

They came from things we don’t like to talk about enough.

Configuration.

Assumptions.

Missing alerts.

Human handoffs.

These bugs don’t crash immediately.

They quietly wait — and then explode at the worst possible moment.

1. Configuration Bugs: When the Code Is Right and Production Is Wrong

Some of the most expensive outages I’ve seen had zero code changes.

The code was correct.

The logic was sound.

Tests passed.

Production still broke.

Real-world example (Fintech)

In a payment system, a timeout configuration for a downstream bank API was reduced from 3 seconds to 800 ms during a “performance tuning” change.

Nothing broke immediately.

But under peak traffic, transactions started timing out intermittently — leading to:

- Duplicate payment attempts

- Inconsistent reconciliation

- Customer complaints about “money deducted but order failed”

No code bug.

Just a config change applied globally.

Configuration bugs are dangerous because they:

- Often bypass code review

- Don’t show up in unit tests

- Often change at runtime

At scale, small config mistakes get amplified instantly.
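
One cheap guard against the timeout story above is to compare a proposed timeout with latency actually observed at peak before the change ships. The sketch below is only an illustration: the function names, the 99th percentile, and the 1.5x headroom factor are my assumptions, not values from the incident.

```python
import math


def percentile(samples_ms, pct):
    """Nearest-rank percentile of a non-empty list of latencies (ms)."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct / 100 * len(ordered))  # 1-based nearest rank
    return ordered[max(rank, 1) - 1]


def timeout_is_safe(proposed_timeout_ms, peak_latencies_ms, pct=99, headroom=1.5):
    """True if the timeout leaves headroom above peak-hour latency.

    pct and headroom are illustrative defaults; tune them per dependency.
    """
    return proposed_timeout_ms >= headroom * percentile(peak_latencies_ms, pct)
```

Feeding it peak-hour samples rather than an all-day average matters, because an average hides exactly the slow tail that made the 800 ms value fail under load.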

2. Assumptions: The Bug That Lives in Your Head

Assumptions are invisible bugs.

Things like:

- “This API will never return null”

- “Traffic won’t spike at night”

- “This service is always fast”

- “This feature flag will never be disabled”

Real-world example (Ads systems)

In an ads-serving system, a component assumed:

“There will always be at least one eligible ad.”

During a misconfigured campaign rollout, zero ads were eligible.

The result?

- Request retries spiked

- Latency exploded

- Entire ad slots went blank during peak traffic

The system didn’t fail gracefully — because the assumption was never documented or challenged.

Production doesn’t care what you assumed.

3. Missing Alerts: The Bug You Find Too Late

The most painful postmortems usually contain a line like this:

“The issue started at 2:17 AM but was detected at 8:45 AM.”

That gap is not a coding problem.

It’s an observability failure.

Real-world example (E-commerce)

In a large e-commerce platform:

- Order creation started failing for a specific payment method

- Error rate slowly climbed from 0.1% to 8%

- No alert fired because thresholds were set too high

By the time humans noticed:

- Thousands of failed checkouts had occurred

- Revenue was already lost

- Customer trust took a hit

A bug that lasts 5 minutes is a hiccup.

A bug that lasts 6 hours is an incident.

The difference is almost always alerts.

4. Human Handoffs: Where Responsibility Gets Lost

Some bugs don’t belong to code or systems.

They belong to process gaps.

Examples:

- Team A deploys, Team B owns production

- Infra is managed by one team, app by another

- A fix is merged, but never deployed

- On-call assumes someone else is watching dashboards

Real-world example (Any large org)

During a production incident:

- The application team waited for infra changes

- Infra waited for confirmation from app team

- No one owned the rollback decision

The system stayed broken longer than necessary — not due to complexity, but ambiguity.

When no one clearly owns the problem, the problem owns everyone.

Why These Bugs Are So Expensive

Coding bugs are usually:

- Local

- Reproducible

- Fixable with a patch

Non-coding bugs are:

- Systemic

- Hard to detect

- Slow to debug

- Costly in downtime and trust

They don’t fail fast.

They fail silently — and then catastrophically.

How Experienced Teams Reduce These Failures (Tech + Process)

You can’t eliminate these bugs completely.

But you can make them rare, visible, and recoverable.

1. Treat Configuration as Code

If configuration can break production, it deserves the same discipline as code.

What works in practice:

- Version-controlled config

- Environment parity (staging ≈ production)

- Explicit defaults

- Config validation at startup

- Safe rollout mechanisms for config changes
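
Explicit defaults and validation at startup can be sketched in a few lines. Everything named here is hypothetical: the `BankApiConfig` class, the `BANK_API_*` environment variables, and the allowed ranges are assumptions chosen to mirror the payment example, not a real system's settings.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class BankApiConfig:
    # Explicit defaults, so a missing variable never falls back silently
    # to whatever a client library happens to use.
    timeout_ms: int = 3000
    max_retries: int = 2

    def __post_init__(self):
        # Assumed safe ranges; a real service would derive these from data.
        if not 1000 <= self.timeout_ms <= 10000:
            raise ValueError(
                f"timeout_ms={self.timeout_ms} is outside the allowed range 1000..10000"
            )
        if not 0 <= self.max_retries <= 5:
            raise ValueError(
                f"max_retries={self.max_retries} is outside the allowed range 0..5"
            )


def load_config(env=None):
    """Build the config from environment variables and fail at startup if invalid."""
    env = os.environ if env is None else env
    return BankApiConfig(
        timeout_ms=int(env.get("BANK_API_TIMEOUT_MS", 3000)),
        max_retries=int(env.get("BANK_API_MAX_RETRIES", 2)),
    )
```

With a check like this, setting `BANK_API_TIMEOUT_MS=800` stops the process at boot with a readable error instead of surfacing hours later as intermittent timeouts.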

If changing a config is scarier than deploying code, your system is lying to you.

2. Make Assumptions Explicit — Then Break Them

Assumptions aren’t evil.

Hidden assumptions are.

Practices that help:

- Document assumptions in code and design docs

- Validate inputs aggressively at service boundaries

- Fail fast when assumptions break

- Write tests that intentionally violate assumptions
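
Here is a rough sketch of what that can look like for the ads example. The `Ad` type, the `HOUSE_AD` fallback, and the highest-bid selection rule are all hypothetical stand-ins; the point is that the "at least one eligible ad" assumption is written down, handled, and tested.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Ad:
    ad_id: str
    bid: float

    def __post_init__(self):
        # Validate at the boundary and fail fast on malformed input.
        if self.bid < 0:
            raise ValueError(f"ad {self.ad_id!r} has a negative bid: {self.bid}")


# Hypothetical fallback creative served when nothing is eligible.
HOUSE_AD = Ad(ad_id="house-default", bid=0.0)


def select_ad(eligible: Sequence[Ad]) -> Ad:
    """Return the highest-bidding ad.

    Documented assumption: `eligible` MAY be empty (for example, after a
    misconfigured campaign rollout). In that case serve the house ad
    instead of retrying or leaving the slot blank.
    """
    if not eligible:
        return HOUSE_AD
    return max(eligible, key=lambda ad: ad.bid)


def test_zero_eligible_ads_serves_house_ad():
    # Intentionally violates the old "always at least one ad" assumption.
    assert select_ad([]) is HOUSE_AD
```

The test is one line, but it turns an unspoken belief into something the build checks on every change.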

Good systems assume failure, not perfection.

3. Design Alerts for Humans, Not Dashboards

Dashboards are passive.

Alerts are promises.

Good alerts answer:

- What broke?

- How bad is it?

- What should I do right now?

Rules that work:

- Alert on user-facing symptoms (error rate, latency), not on every raw metric

- Prefer fewer alerts over noisy alerts

- Tie alerts to clear ownership

- Include runbook links

- Test alerts during working hours
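
As a sketch of these rules, the check below fires when the error rate exceeds a multiple of its normal baseline instead of a fixed high threshold, and the alert it returns carries an owner and a runbook link. The `AlertRule` fields, the multiplier, and the example URL are assumptions for illustration; a real setup would usually express this in the monitoring system's own rule language.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertRule:
    name: str
    baseline_error_rate: float  # e.g. 0.001 for a normal 0.1% error rate
    max_multiple: float         # fire when the rate exceeds baseline * this
    min_requests: int           # skip windows too small to be meaningful
    owner: str
    runbook_url: str


def evaluate(rule, errors, requests):
    """Return an alert dict if this window breaches the rule, else None."""
    if requests < rule.min_requests:
        return None
    rate = errors / requests
    if rate <= rule.baseline_error_rate * rule.max_multiple:
        return None
    return {
        "what": rule.name,
        "how_bad": f"error rate {rate:.2%} vs baseline {rule.baseline_error_rate:.2%}",
        "do_now": rule.runbook_url,
        "owner": rule.owner,
    }
```

A usage example with made-up values:

```python
rule = AlertRule(
    name="order-creation-errors",
    baseline_error_rate=0.001,
    max_multiple=5,
    min_requests=100,
    owner="payments-oncall",                                  # hypothetical rotation
    runbook_url="https://runbooks.example.com/order-errors",  # hypothetical link
)
alert = evaluate(rule, errors=10, requests=1000)
```

A relative threshold like this would have fired early in the slow climb from 0.1% toward 8%, well before a fixed high threshold noticed anything.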

If an alert wakes someone up, it must be actionable.

4. Define Ownership Before Incidents, Not During Them

Most human handoff bugs disappear when ownership is clear.

What helps:

- Clear service ownership

- Named on-call rotations

- Blameless postmortems

- Explicit escalation paths

- Regular incident drills

Most production failures are coordination problems, not technical ones.

5. Build Systems That Degrade Gracefully

Perfect systems don’t exist.

Resilient systems do.

Common resilience patterns:

- Timeouts everywhere

- Circuit breakers for dependencies

- Rate limits to protect downstream systems

- Feature flags for safe rollback

- Partial success over total failure
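
To make two of these patterns concrete, here is a deliberately small circuit breaker with a fallback. It is a teaching sketch under stated assumptions, not a production library: the thresholds are arbitrary, it is not thread-safe, and it assumes the wrapped call enforces its own timeout and raises when the dependency is too slow.

```python
import time


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock          # injectable so tests can control time
        self.failures = 0
        self.opened_at = None       # None means the circuit is closed

    def call(self, func, fallback):
        """Run func(); on failure or while the circuit is open, return fallback()."""
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                return fallback()   # open: skip the dependency entirely
            self.opened_at = None   # half-open: allow one trial call
        try:
            result = func()
        except Exception:           # broad on purpose for this sketch
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            return fallback()       # partial success instead of total failure
        self.failures = 0           # a success closes the circuit again
        return result
```

The fallback might return cached data or a reduced response. While the circuit is open, the struggling dependency gets no traffic from this caller, which gives it room to recover.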

A slow system that stays up is often better than a fast system that crashes.

What Changes as You Get More Senior

Junior engineers ask:

“Will this work?”

Senior engineers ask:

“How will this fail?”

Staff and principal engineers ask:

“How will we know it’s failing — and who owns the fix?”

That evolution has very little to do with syntax.

It has everything to do with systems thinking.

Final Thoughts

The most expensive bugs I’ve seen weren’t written in code.

They were written in:

- YAML files

- Mental assumptions

- Missing alerts

- Slack handoffs

- Unclear ownership

And the uncomfortable truth is this:

Most production bugs are social and operational problems wearing technical disguises.

If you focus only on code, production will eventually teach you the rest — the hard way.

👋 If this resonated

- Share the most painful non-coding bug you’ve seen

- These stories help teams avoid repeating the same mistakes